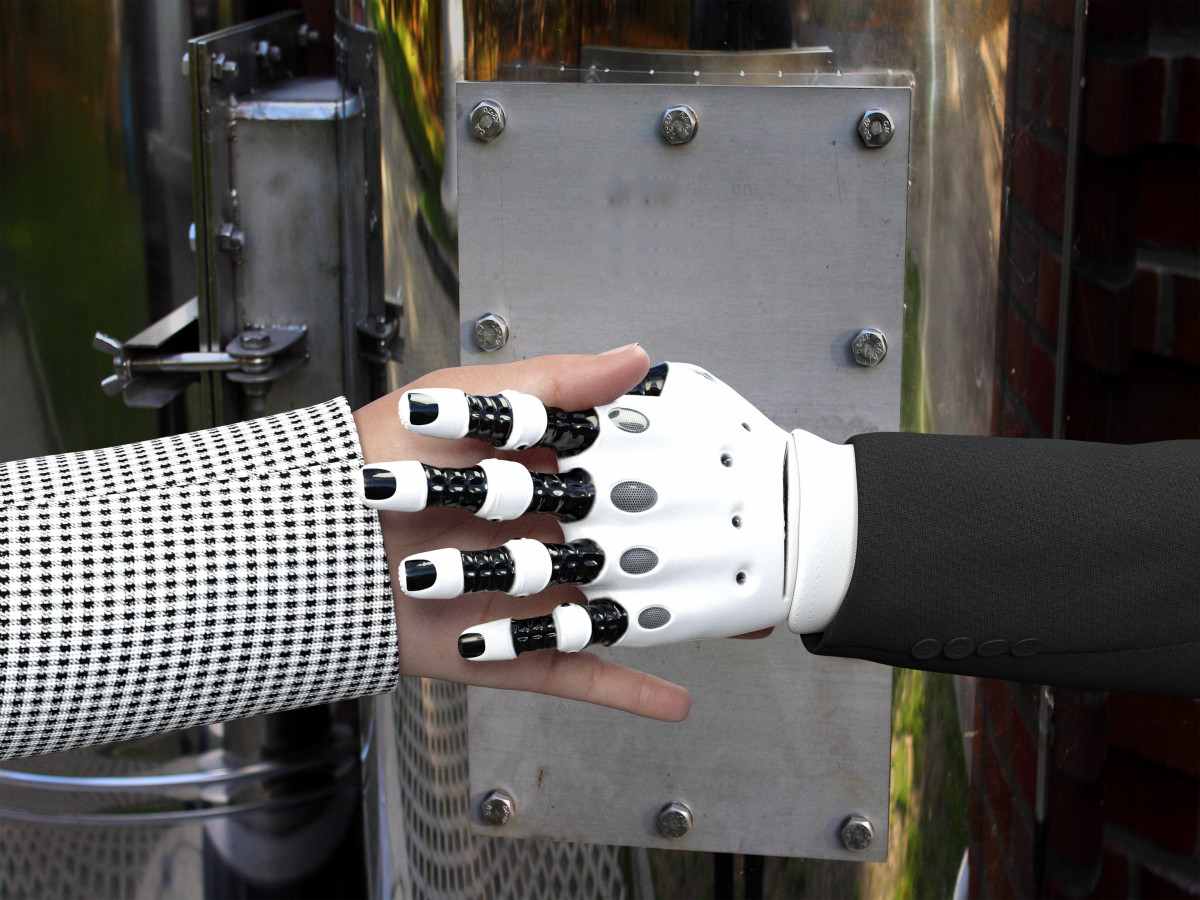

Artificial intelligence (AI) is no longer a futuristic concept; it is already reshaping everyday life, from how we use our phones to how we manage complex systems. In the military sphere, AI promises greater efficiency and streamlined logistics. Yet integrating AI into the armed forces also brings significant risks and ethical dilemmas. To address these concerns, Senator Elissa Slotkin introduced the AI Guardrails Act, a legislative proposal that seeks to establish robust oversight mechanisms so that AI advancements serve national security without compromising safety or civil liberties.

The Promise and Peril of AI in Military Operations

Modern armed forces, especially the U.S. Air Force, are already leveraging AI across a range of functions. AI helps optimize supply‑chain routes, sift through vast amounts of intelligence data, and provide real‑time decision support for tactical commanders. These applications translate into faster response times, higher precision, and reduced human workload—critical advantages in high‑stakes environments.

However, the very nature of military operations—often involving life‑and‑death decisions—demands extraordinary caution. When AI is applied to weapon systems, particularly autonomous platforms, the potential for errors, system failures, or malicious manipulation becomes a grave concern. Imagine an autonomous drone that, without direct human authorization, initiates an attack. The consequences could be catastrophic: unintended civilian casualties, escalation of conflict, and a breach of international norms.

Beyond combat roles, AI can also be used for surveillance. While surveillance can enhance security, its application to domestic populations without clear legal frameworks and oversight opens the door to abuse and infringements on fundamental human rights. Striking a balance between harnessing AI’s benefits and protecting citizens from its potential harms is therefore essential.

Key Provisions of the AI Guardrails Act

The AI Guardrails Act is designed to embed human oversight into every stage of AI development and deployment within the military. Below are the core elements of the bill:

- Human‑in‑the‑Loop Requirement: All AI‑enabled weapon systems must have a clear human decision point before any lethal action is taken.

- Transparency and Documentation: Developers must maintain detailed logs of AI training data, decision‑making processes, and system performance metrics.

- Independent Audits: An external oversight body will conduct regular audits to verify compliance with safety standards and ethical guidelines.

- Accountability Framework: Clear lines of responsibility will be established for AI system failures, ensuring that commanders and developers can be held accountable.

- Privacy Safeguards: AI systems used for surveillance must adhere to strict data protection standards, limiting the collection and retention of civilian information.

- International Compliance: The Act aligns U.S. AI practices with international humanitarian law, ensuring that autonomous systems respect the principles of distinction, proportionality, and
